Backups are important. While they may not be mission critical, they are certainly business critical. The difference is that a company could potentially go a few hours, a few days, a few weeks, dare I say even longer, without a working backup. As long as your servers keep running, no one deletes anything they shouldn’t, no data gets corrupted, you’re not targeted by the latest data-encrypting attack, and <insert one of thousands of scenarios where you would need to restore from a backup> never happens, your users and your company will happily keep on running, blissfully ignorant of their imperceptible fragility.

And then you need to restore something. And you can’t.

In a recent engagement with a client, I was challenged with the failure of a Synology NAS that was connected to a VMware environment. The Synology presented a very large iSCSI LUN to the ESXi hosts, which had formatted it as a datastore. On the datastore was a virtualized backup server, with the backup files themselves stored inside the guest operating system. This environment had been running fine until the Synology NAS failed. It would blink its power light and its alert light for about 5 minutes and then shut itself off. The device was still under warranty, and Synology sent out a replacement.

When the replacement arrived, the drives were pulled from the failed Synology unit and moved to the new one. During boot, the new unit detects that the disks already hold a configuration and imports it. It appeared at this point that everything was going well, and we were well on our way to having our VMware datastore and backup server up and running.

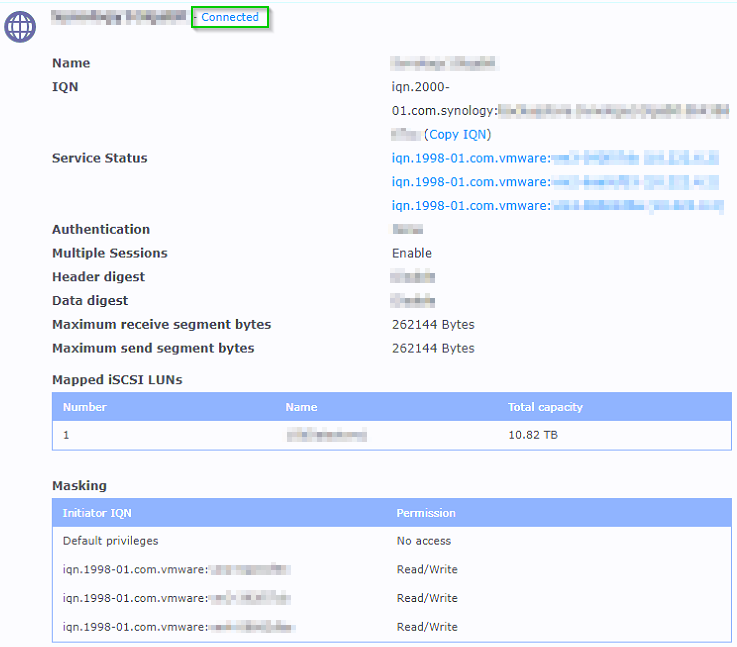

However, when that process completed, the VMware ESXi hosts did not have the datastore mounted. From Synology’s perspective, everything looked great. The iSCSI target was connected, the iSCSI LUN was mapped, and the 3 ESXi hosts were masked appropriately to have Read/Write access. Still, the datastore was not showing on the hosts.

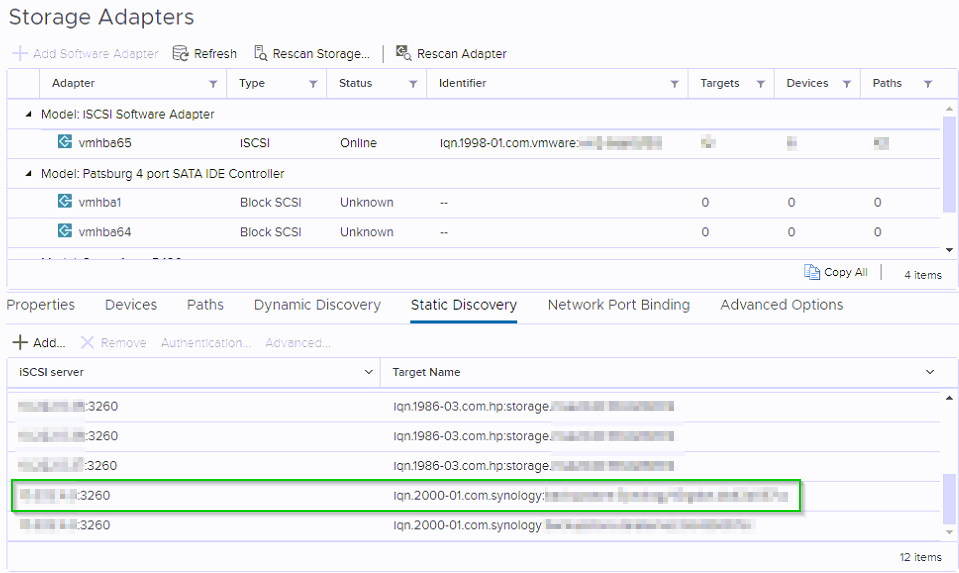

From the ESXi hosts, connectivity also looked fine. The iSCSI storage adapter still had the iSCSI server details under static discovery.

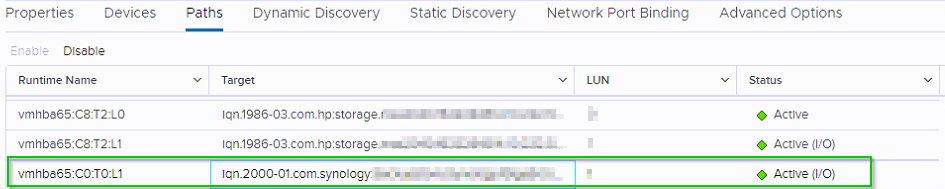

And there was an active path to the storage.

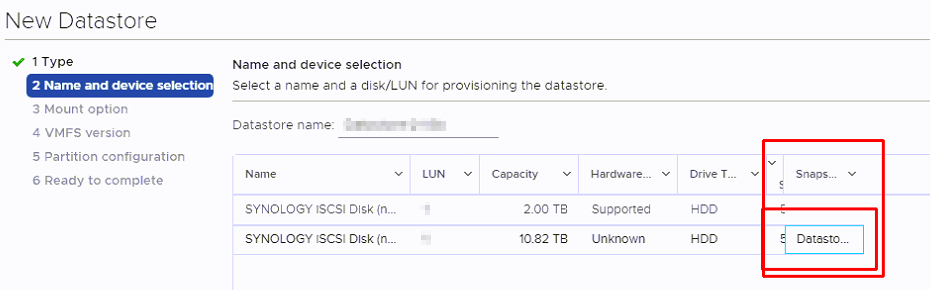

So why no datastore? I went to the ESXi host to add storage and saw the LUN offered as available for a new datastore, but noticed the old datastore name in the Snapshot column. It saw the VMFS volume! There is hope yet. I certainly did not create a snapshot on the LUN, but VMware believed there was one.

I found this KB, https://kb.vmware.com/s/article/2129058, which refers to vSAN incorrectly identifying devices as having snapshots. While the situation was only similar, I thought I would give it a go.

Synology NAS Troubleshooting Begins

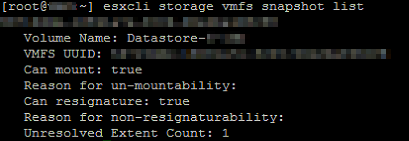

I ran this command:

esxcli storage vmfs snapshot list

And it did show a snapshot for this volume.

Per the KB article I then ran this command:

esxcfg-advcfg -s 0 /LVM/DisallowSnapshotLun

Which returned:

Value of DisallowSnapshotLun is 0

After which I issued commands to rescan the storage:

esxcli storage core adapter rescan --all

vmkfstools -V

Afterwards running the first command again showed no results.

esxcli storage vmfs snapshot list
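The sequence in this section (list, change the setting, rescan, list again) can be collected into one function for repeat runs. This is a minimal sketch for the ESXi shell that uses only the commands already shown; the function name `clear_snapshot_flag_and_rescan` is my own invention, not part of any VMware tooling.

```shell
#!/bin/sh
# Hypothetical helper (the name is an assumption): repeat the snapshot
# check from this section using only the commands shown in the post.
clear_snapshot_flag_and_rescan() {
    echo "Snapshot volumes before the change:"
    esxcli storage vmfs snapshot list

    # Setting taken from the KB article; it applies to the whole host.
    esxcfg-advcfg -s 0 /LVM/DisallowSnapshotLun || return 1

    # Rescan all storage adapters, then refresh VMFS volume information.
    esxcli storage core adapter rescan --all || return 1
    vmkfstools -V || return 1

    echo "Snapshot volumes after the change:"
    esxcli storage vmfs snapshot list
}
```

Calling `clear_snapshot_flag_and_rescan` on the host prints the snapshot list before and after, so the two can be compared in one place.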

I was hopeful that the datastore would load, but it did not. I looked at the logs with this command:

cat /var/log/vmkernel.log | less

It showed this error:

“Denying reservation access on an ATS-only vol.”
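Paging through the whole log works, but filtering for the message is quicker on a busy host. The sketch below assumes the log lives at the path used above and searches for the exact message text quoted here; the function name `find_ats_denials` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print vmkernel log lines that contain the
# ATS-only reservation denial quoted in this post.
find_ats_denials() {
    # Default to the log path used earlier; allow another file as $1.
    logfile="${1:-/var/log/vmkernel.log}"
    # -F treats the pattern as a fixed string, not a regular expression.
    grep -F "Denying reservation access on an ATS-only vol" "$logfile"
}
```

Running `find_ats_denials` with no argument searches the live log; passing a path lets you check a copied or rotated log instead.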

This is where VMware support came into the picture. It took 5 different VMware techs, but I eventually got one who knew enough about ATS.

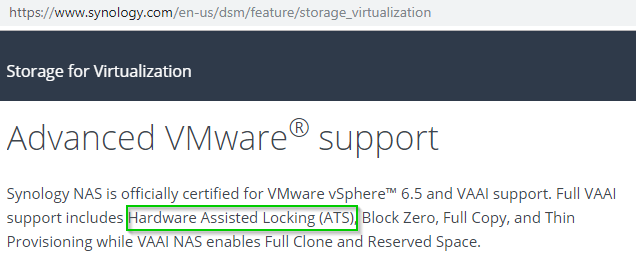

Let’s take a quick tangent on ATS. Before this I didn’t know what ATS was, and chances are you don’t either. You can set up new iSCSI LUNs as datastores in VMware for years and years and never know what ATS is. ATS stands for atomic test and set, a locking mechanism. When multiple hosts connect to the same iSCSI storage, locking is in place so that data corruption doesn’t occur. ATS is a more advanced form of locking than the standard SCSI locking that just about every device supports. According to Synology’s website, their NAS units support it.

After a call into Synology support asking them to confirm that this Synology unit supported ATS, I was greeted with the response of “what’s that?” Well, at least I’m glad I’m not the only one who was unaware of what ATS is. After the tech looked through some documentation, he found that only their newer units that end in “+” or “xs” support ATS. Our unit did end in a +, but being a few years old, it did not support ATS.

Back to troubleshooting…

The logs showed that while the connectivity was there, the ESXi hosts were not allowing the datastore to mount because they believed it was set to ATS-only mode.

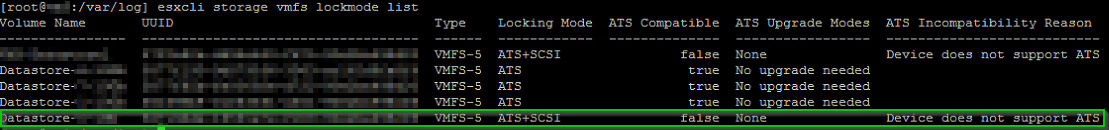

However, when looking at the list of storage, it was clear that it was in the ATS+SCSI locking mode.

esxcli storage vmfs lockmode list

Well, one of those is wrong. Trusting the log’s reason for not allowing the datastore to mount, I found a KB article that describes how to change the locking mode to ATS+SCSI.

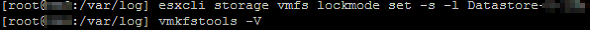

These commands change the locking mode and then rescan the storage:

esxcli storage vmfs lockmode set -s -l <datastore name>

vmkfstools -V

It did not change anything as it appeared to already be in ATS+SCSI mode.

The next troubleshooting step was to put the host into SCSI-only mode so that it would not use ATS. This can be dangerous, as described here: https://kb.vmware.com/s/article/2094604.

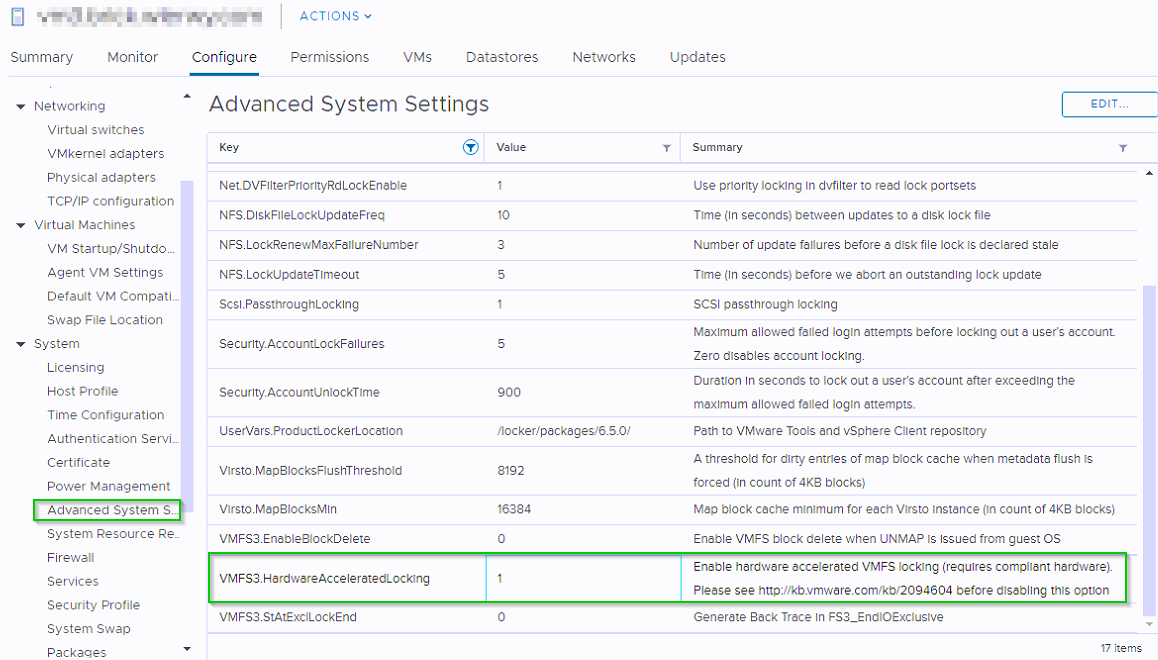

If one host is using SCSI locking and another is using ATS, data corruption could result. The safest bet is to vMotion the VMs from one host to another, put the emptied host in maintenance mode, and experiment with just that host, which is then no longer actively using the other datastores. After the host was put in maintenance mode, it was changed to not use ATS by setting the VMFS3.HardwareAcceleratedLocking advanced system setting to 0. This is found in Configure > System > Advanced System Settings. Once it was set to 0, a rescan of the storage mounted the datastore!

Note: while ATS compatible datastores prefer to mount in ATS locking mode, they will connect in SCSI locking mode if that is all that is available to use.
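I made this change in the vSphere Client, but the same setting can also be read and set from the ESXi shell. The sketch below is an assumption about how you might wrap that; `esxcli system settings advanced` is a real esxcli namespace, while the function names are hypothetical. As above, only do this on a host that is in maintenance mode.

```shell
#!/bin/sh
# Hypothetical helpers around the advanced setting discussed in this post.
ATS_OPTION="/VMFS3/HardwareAcceleratedLocking"

# Show the current value of the setting on this host.
show_ats_locking() {
    esxcli system settings advanced list -o "$ATS_OPTION"
}

# Set the value: 0 stops this host from using ATS locking, 1 allows it.
set_ats_locking() {
    case "$1" in
        0|1) ;;
        *) echo "usage: set_ats_locking 0|1" >&2; return 2 ;;
    esac
    esxcli system settings advanced set -i "$1" -o "$ATS_OPTION"
}
```

For example, `set_ats_locking 0` followed by a storage rescan mirrors the step described above, and `show_ats_locking` confirms what the host currently has configured.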

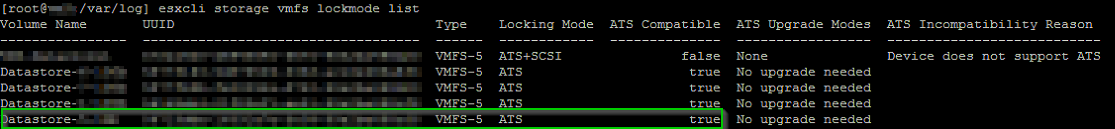

The next step was to change the value of VMFS3.HardwareAcceleratedLocking back to 1 to allow the ATS locking mode. After doing so, the datastore dropped again. It really must have been set for ATS only. We changed the value back to 0 and checked again with:

esxcli storage vmfs lockmode list

The datastore was now reporting ATS compatible as true, even though it had previously reported false.

With the datastore now mounted, I ran the commands from the KB article again to change it to ATS+SCSI and rescan the storage:

esxcli storage vmfs lockmode set -s -l <datastore name>

vmkfstools -V

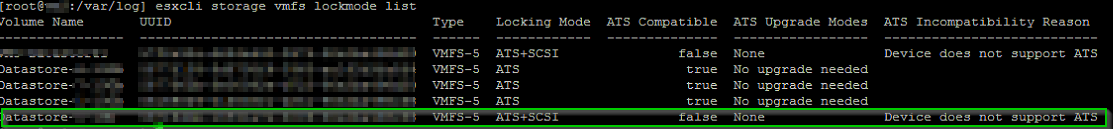

And now the Datastore is finally showing the correct ATS compatibility:

esxcli storage vmfs lockmode list

After the datastore was set correctly, the last thing to do was to change VMFS3.HardwareAcceleratedLocking back to 1.

The datastore remained connected and the long story of a failed Synology unit that doesn’t support ATS with an iSCSI LUN being used as a VMware datastore that was flagged to use ATS came to a close. The host was taken out of maintenance mode and the VMs were rebalanced. The backup server that was running on the host was registered to the cluster again and powered on just fine.
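For reference, the order of operations that finally worked can be sketched as a single dry-run script. This is only an outline of what I did by hand, not a tested recovery tool: the `run` wrapper, the `DRY_RUN` variable, and the function name are all hypothetical, and the CLI form of the advanced setting is my assumption of the equivalent to the vSphere Client change. By default it only prints the commands.

```shell
#!/bin/sh
# Hypothetical wrapper: with DRY_RUN=1 (the default here) commands are
# printed instead of executed.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical outline of the steps from this post, for ONE host that is
# already in maintenance mode. $1 is the datastore label.
remount_ats_flagged_datastore() {
    label="$1"
    [ -n "$label" ] || { echo "usage: remount_ats_flagged_datastore <label>" >&2; return 2; }
    opt="/VMFS3/HardwareAcceleratedLocking"

    # 1. Stop this host from using ATS locking, then rescan so it mounts.
    run esxcli system settings advanced set -i 0 -o "$opt" || return 1
    run esxcli storage core adapter rescan --all || return 1

    # 2. Change the volume's locking mode to ATS+SCSI and rescan.
    run esxcli storage vmfs lockmode set -s -l "$label" || return 1
    run vmkfstools -V || return 1

    # 3. Review the reported locking mode before going further.
    run esxcli storage vmfs lockmode list || return 1

    # 4. Allow ATS locking on the host again.
    run esxcli system settings advanced set -i 1 -o "$opt"
}
```

Running `remount_ats_flagged_datastore <datastore name>` prints the six commands in order. In practice I would still run them one at a time and check the result of each, since step 4 only makes sense once step 3 shows the expected locking mode.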

It took 5 different VMware storage techs, at severity 2 and severity 1 levels, plus my own searching to find the answer, but now I will never forget it.
